Imagine browsing through a competitor’s website and suddenly noticing a service package that looks almost identical to one your organization developed internally. The structure, features, and even the pricing model seem oddly familiar. These were details meant to remain confidential, known only within your team. So how did they end up in the open?

The surprising truth is that the leak might not have come from a hacker or corporate espionage. It could have come from something far more mundane: the way we casually use modern AI tools.

A 2025 survey from Elon University found that more than half of AI users use at least two AI models, while about 45% of them have submitted prompts containing proprietary or sensitive information. Many business owners and entrepreneurs fall into this trap, often unaware of how easily their information can be exposed when using AI tools. Without proper precautions, sensitive details can be unintentionally shared with or accessed by others.

Real-World AI Data Leak Examples

There have been real-life cases where data leaked or where users were put at risk of having their private information misused by others. Here are a few of them:

- In 2023, engineers at a mobile electronics company shared confidential semiconductor source code and internal program data with an AI tool. This raised data confidentiality concerns, and the company ended up banning employees from using the tool.

- In 2025, a security incident involving a third-party analytics provider resulted in the exposure of limited user data related to the API platform. Although the breach did not involve prompts or AI model data, it exposed information such as customer details connected to the platform’s analytics systems.

- In 2026, the Times of India reported that hackers used AI tools to help craft phishing emails and automate parts of a cyberattack that led to the theft of 150GB of confidential data from the Mexican government. The AI tools themselves were not breached, but they were used to accelerate the attack and bypass security filters.

So, How Does a Leakage Occur?

A basic and common way of using AI is by providing prompts and allowing the system to generate responses continuously. However, users sometimes include sensitive data in those prompts to provide better context. This may involve information such as intellectual property, customer information, financial data, legal documents, and more.

In addition, some users have gone as far as pasting production database dumps into AI chat interfaces, using AI plugins without checking their permissions, connecting AI tools directly to company systems, or allowing AI tools to access cloud drives without proper safeguards.

These are the major mistakes users often make, and they open the door to privacy breaches in situations such as the following:

- Prompt data stored by AI providers

- Training data reuse (in some systems)

- Misconfigured AI integrations

- Shared AI chat histories

- AI plugins accessing external tools

- Cloud storage exposure

How to Enjoy AI Without Risk?

To benefit from AI tools without compromising sensitive information, users must adopt responsible practices when interacting with these systems. Since most AI platforms operate on cloud infrastructure, any data shared through prompts, uploads, or integrations may leave the environment the organization controls.

The following steps will allow users to use AI tools more safely and responsibly:

- Avoid sensitive information: remove names, emails, IDs, API keys, credentials, internal URLs, or any secrets that are not meant to be shared with the public.

- Mask data: replace sensitive information with placeholders. For instance, replace a customer’s name with ‘Customer A’ and a company name with ‘Company X’.

- Use enterprise versions: many enterprise versions offer stronger privacy protections, where data is not used for training, encryption is stronger, access is tightly controlled, and audit logs are included.

- Disable data sharing and history: turn off the option to share data for training in the tool’s settings, and erase conversation history whenever possible.

- Use AI through APIs: for developers especially, APIs can be safer than chat interfaces because they allow more controlled environments, automated anonymization, security gateways, and monitoring tools.

- Use AI sandboxes or internal AI: large companies may deploy internal AI assistants, private LLMs, and local AI models for stricter compliance and full data control.
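The removal and masking steps in the list above can be automated before a prompt ever leaves your machine. The following is a minimal Python sketch, not a complete solution: the function name `mask_prompt`, the three regular expressions, and the `sk-` key format are all assumptions chosen for illustration, and real data would need broader, tested rules.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive rule set).
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),  # assumed key format
    "URL": re.compile(r"https?://\S+"),
}


def mask_prompt(text, known_names=None):
    """Replace sensitive values with placeholders.

    Returns the masked text and a mapping of placeholder -> original value.
    The mapping stays on your side so answers can be un-masked locally.
    """
    mapping = {}

    # Names you already know are sensitive, e.g. {"Acme Corp": "Company X"}.
    for original, placeholder in (known_names or {}).items():
        if original in text:
            text = text.replace(original, placeholder)
            mapping[placeholder] = original

    for label, pattern in PATTERNS.items():
        seen = {}  # original value -> placeholder, so repeats stay consistent

        def substitute(match, label=label, seen=seen):
            value = match.group(0)
            if value not in seen:
                seen[value] = f"<{label}_{len(seen) + 1}>"
                mapping[seen[value]] = value
            return seen[value]

        text = pattern.sub(substitute, text)

    return text, mapping
```

Calling `mask_prompt(prompt, {"Acme Corp": "Company X"})` returns the masked prompt to send to the AI tool, plus the mapping, which is kept locally. Because the same value always receives the same placeholder, the AI tool can still reason about "the same customer" without ever seeing the real name.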

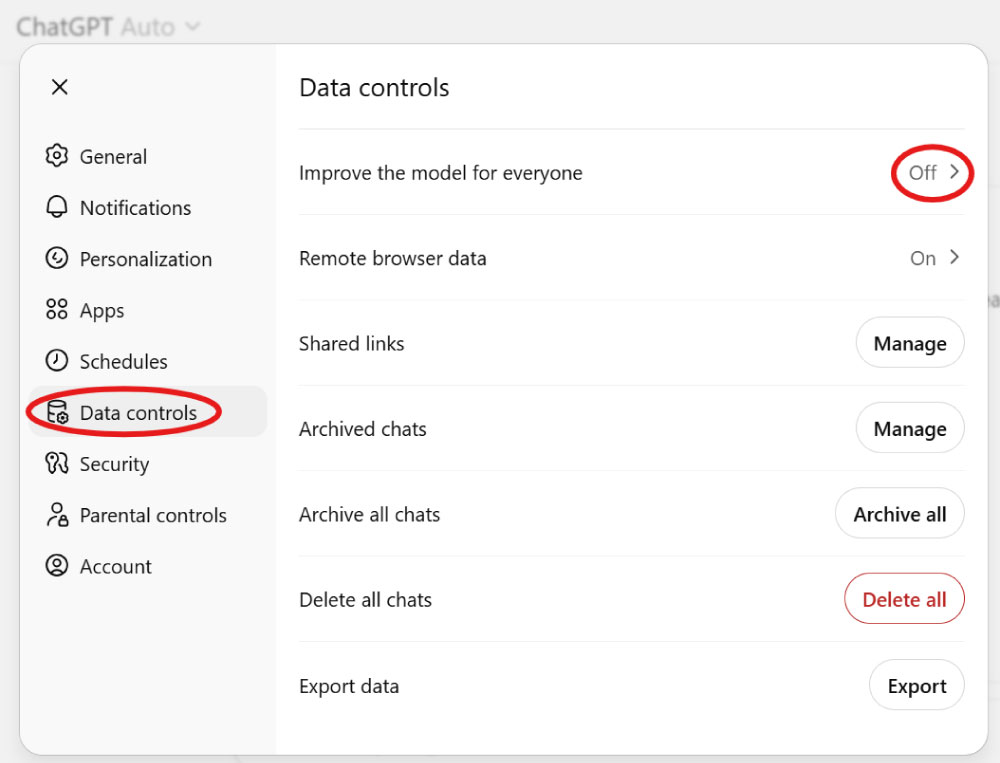

Managing Data Controls in AI Tools

Many online platforms allow users to manage how their data is stored, used, and retained. Taking the extra step of checking these settings is crucial in reducing the risk of sensitive information being stored or used for model training. Some popular AI tools, such as ChatGPT and Google Gemini, allow users to control their data by adjusting settings as follows:

ChatGPT: Go to ‘Settings’ > ‘Data Controls’ and turn off ‘Improve the model for everyone’ (or similar wording, depending on the interface).

When this setting is disabled, all conversations will still be processed to generate responses but will not be used to train future versions of the model. Users can also review or delete past conversations from their chat history for additional control.

Google Gemini: Go to ‘Settings’ > ‘Gemini Apps Activity’ and disable activity tracking.

Users can also review and delete their Gemini activity through their ‘Google Activity Controls’, which helps prevent long-term storage of sensitive prompts.

A Quick Checklist and What Lies Ahead

Before entering any information into an AI tool, it helps to pause and run through a quick mental checklist. Ask yourself whether the data contains confidential company information, personal identifiers, intellectual property, or anything that could cause harm if it were exposed publicly. If the answer is yes, the safest approach is to avoid sharing it or to anonymize the details first. Developing this habit encourages more responsible AI usage and helps prevent accidental exposure of sensitive information.
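For teams that want a safety net behind the mental checklist, part of it can be turned into a rough automated check that runs before a prompt is sent. The Python sketch below is only an illustration under stated assumptions: the names `CHECKS`, `review_prompt`, and `safe_to_send` are hypothetical, and the three patterns are simple heuristics that will miss many cases (intellectual property, for example, cannot be detected by a regular expression).

```python
import re

# Hypothetical rules: each maps a checklist question to a simple heuristic.
CHECKS = {
    "personal identifier (email address)": re.compile(
        r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"
    ),
    "credential or secret": re.compile(
        r"(?i)\b(?:password|passwd|api[_-]?key|secret|token)\b\s*[:=]"
    ),
    "confidentiality marker": re.compile(
        r"(?i)\b(?:confidential|internal only|do not distribute)\b"
    ),
}


def review_prompt(text):
    """Return the checklist items that the text appears to trigger."""
    return [reason for reason, pattern in CHECKS.items() if pattern.search(text)]


def safe_to_send(text):
    """True only if no heuristic fired; this does not prove the text is safe."""
    return not review_prompt(text)
```

A check like this only catches obvious slips. An empty result means nothing was flagged, not that the prompt is free of sensitive information, so the human pause described above remains the main safeguard.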

As AI adoption continues to grow, organizations and technology providers are increasingly focusing on privacy-first approaches. New developments such as private AI deployments, on-premises language models, and stronger data governance controls are helping businesses benefit from AI while keeping sensitive information protected. Moving forward, the key to successful AI adoption will not only be innovation, but also the ability to balance productivity with strong data privacy practices.